Sam Altman Blasts Anthropic’s Mythos Model as Deceptive ‘Fear-Based Marketing’ in AI Security War

BitcoinWorld

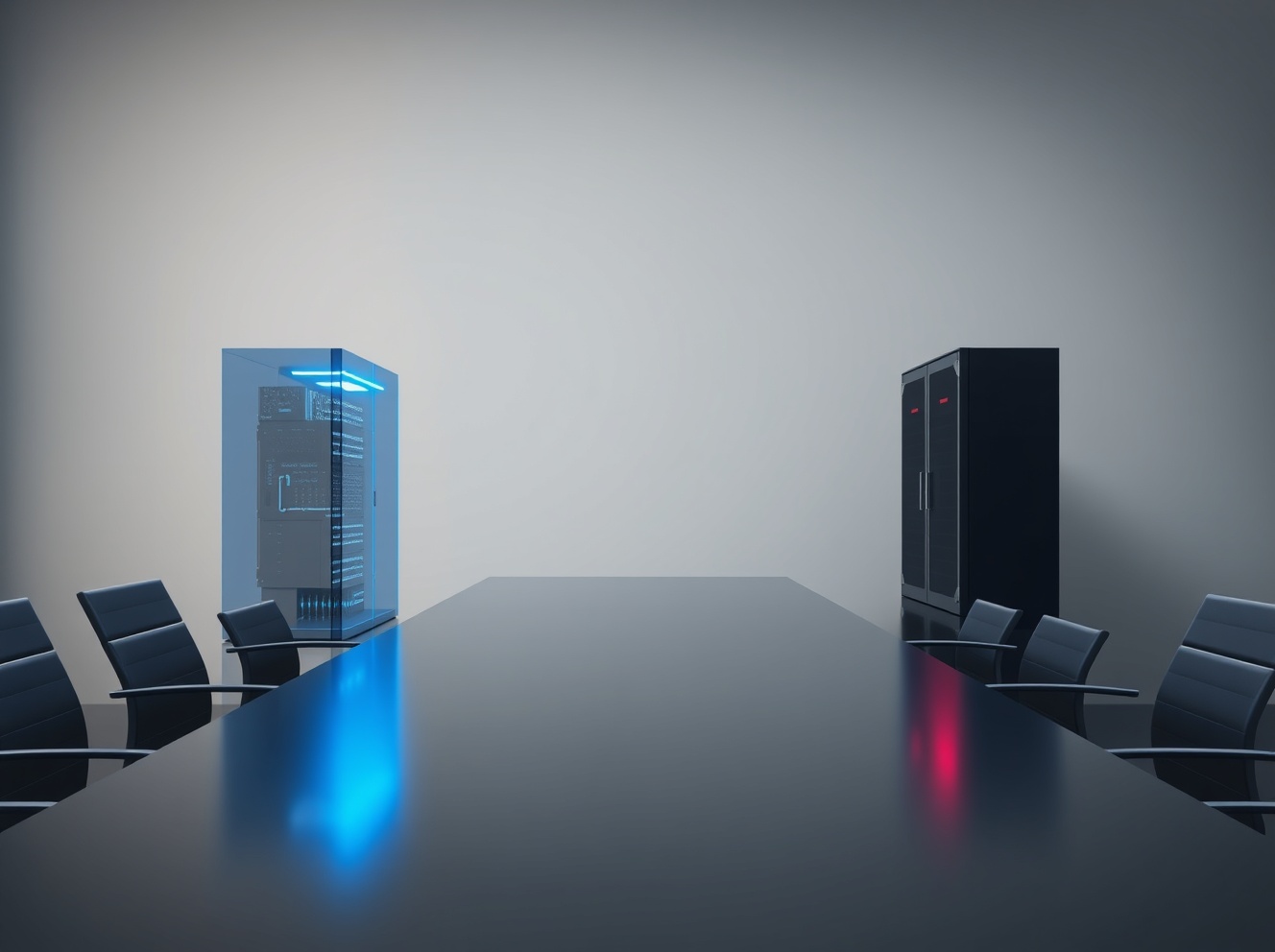

In a striking public critique that has reignited tensions within the artificial intelligence sector, OpenAI CEO Sam Altman has directly challenged the marketing strategy behind rival Anthropic’s new cybersecurity model, Mythos, labeling it as exploitative ‘fear-based marketing.’ This accusation, made during a recent podcast appearance, underscores the deepening philosophical and commercial rift between two of the world’s leading AI labs. The debate centers on fundamental questions of AI safety, public access, and the ethical responsibilities of developers in an increasingly competitive landscape.

Sam Altman Condemns Anthropic’s Rhetoric on Mythos

During an April 21, 2026, appearance on the ‘Core Memory’ podcast, Sam Altman addressed Anthropic’s recent launch of its specialized cybersecurity AI, Mythos. Anthropic had announced the model earlier in the month, releasing it exclusively to a select group of enterprise clients. The company justified this limited release with a stark warning: Mythos was too powerful for public access, because malicious actors could weaponize its capabilities. Altman, however, framed this justification not as a prudent safety protocol but as a calculated marketing tactic designed to create artificial scarcity and fear.

“It is clearly incredible marketing to say, ‘We have built a bomb, we are about to drop it on your head. We will sell you a bomb shelter for $100 million,’” Altman stated, employing a vivid metaphor to critique his competitor’s approach. He further suggested this strategy aligns with a broader, longstanding desire among certain factions to restrict advanced AI to a privileged few. “There are people in the world who, for a long time, have wanted to keep AI in the hands of a smaller group of people,” Altman remarked. “You can justify that in a lot of different ways.”

The Launch and Controversy of Anthropic’s Mythos Model

Anthropic, founded by former OpenAI researchers, introduced Mythos as a frontier model specifically engineered for cybersecurity applications. The company’s core claim positioned Mythos as a dual-use technology of exceptional potency. Consequently, Anthropic argued that a broad release posed an unacceptable risk, necessitating a tightly controlled, enterprise-only rollout. This stance immediately drew scrutiny from industry analysts and ethicists.

Critics, including several independent AI safety researchers cited in tech policy journals, have argued that this rhetoric is overblown and may serve multiple purposes:

- Commercial Positioning: Creating an aura of unparalleled power to justify premium pricing for enterprise clients.

- Regulatory Narrative: Shaping the conversation around AI governance to favor controlled development environments.

- Competitive Differentiation: Distinguishing Anthropic’s ‘safety-first’ brand from OpenAI’s more iterative public release strategy.

This incident is not an isolated one but rather the latest episode in an ongoing series of public exchanges between OpenAI and Anthropic. The two organizations, despite sharing common roots, have increasingly diverged in their public messaging and strategic approaches to AI deployment.

A History of Strategic Divergence and Public Sparring

The friction between OpenAI and Anthropic is deeply rooted in their founding principles and subsequent evolution. Anthropic was established with a central focus on AI safety and alignment research, often advocating for more cautious development timelines. OpenAI, while maintaining a safety research division, has generally pursued a more aggressive strategy of iterative public deployment, as seen with ChatGPT and its successors. This fundamental philosophical difference now manifests in public disputes over product launches and marketing.

Industry analysts note that these public spats serve to highlight each company’s unique value proposition to different stakeholders: investors, clients, regulators, and the talent pool. The table below outlines the core contrasting positions evident in the Mythos debate:

| Point of Contention | Anthropic’s Stance (Implied by Mythos Launch) | OpenAI/Altman’s Critique |
|---|---|---|
| Risk Disclosure | Extreme caution; highlight worst-case dual-use potential. | Fear-mongering; using alarmism for commercial gain. |
| Access Model | Restricted, enterprise-only for high-stakes models. | Elitist; concentrates power and stifles broad innovation. |
| Safety Narrative | Safety requires controlled, limited release environments. | Safety is best achieved through transparency and widespread testing. |

The Broader Context of ‘Fear-Based Marketing’ in AI

Altman’s critique of Anthropic taps into a wider, longstanding complaint about the AI industry’s communication strategies. For years, discussions about artificial general intelligence (AGI) and frontier models have been punctuated by dramatic warnings about existential risk. Notably, these warnings have often originated from the very companies building the technology. This creates a paradoxical situation where the sellers of a product are also its most prominent doomsayers.

Experts in technology ethics from institutions like the Stanford Institute for Human-Centered AI have observed this pattern. They note that hyperbolic risk narratives can achieve several strategic objectives simultaneously:

- They attract media attention and establish a company as a serious player grappling with profound questions.

- They can influence regulatory frameworks, potentially creating barriers to entry for smaller competitors.

- They justify high valuation premiums based on the world-altering potential of the technology.

However, this strategy carries significant reputational risk. As Altman’s comments indicate, competitors can frame this caution as disingenuous marketing. Furthermore, constant doom-laden rhetoric may eventually lead to public cynicism or alarm fatigue, undermining legitimate safety concerns. The challenge for the industry is to articulate real risks responsibly without veering into what critics see as self-serving hyperbole.

Balancing Innovation, Safety, and Public Trust

The Altman-Anthropic dispute ultimately reflects a trilemma facing the entire advanced AI industry: how to balance rapid innovation, responsible safety protocols, and the maintenance of public trust. Anthropic’s approach with Mythos prioritizes a specific interpretation of safety through controlled access. OpenAI’s criticism advocates for a different path that views broad, supervised exposure as a key component of robust safety testing.

This debate occurs against a backdrop of increasing regulatory scrutiny worldwide. Legislative bodies in the United States, European Union, and elsewhere are actively crafting rules for high-risk AI applications. The rhetoric companies use today directly informs the regulatory paradigms of tomorrow. If policymakers perceive the industry as crying wolf for commercial advantage, they may discount genuine warnings. Conversely, if they accept the most alarming risk assessments at face value, they may enact regulations that stifle beneficial innovation.

Conclusion

Sam Altman’s public critique of Anthropic’s Mythos model as ‘fear-based marketing’ reflects more than mere corporate rivalry. It represents a critical inflection point in the public discourse surrounding AI development, safety, and commercialization. The exchange forces a necessary examination of how tech leaders communicate risk, justify access restrictions, and compete in a market where perception of capability is as crucial as capability itself. As AI continues its integration into critical domains like cybersecurity, the outcome of this debate between open iteration and controlled deployment will significantly influence not only the competitive landscape but also the societal framework governing this transformative technology. The path forward requires a nuanced, transparent dialogue that separates legitimate safety engineering from strategic market positioning.

FAQs

Q1: What is Anthropic’s Mythos model?

Anthropic’s Mythos is a specialized artificial intelligence model designed for cybersecurity applications. The company claims it is highly powerful and has released it only to a limited cohort of enterprise customers, citing concerns about potential weaponization by malicious actors.

Q2: What exactly did Sam Altman say about Mythos?

During an appearance on the ‘Core Memory’ podcast on April 21, 2026, OpenAI CEO Sam Altman criticized the launch strategy for Mythos, likening it to announcing a bomb and then selling a $100 million bomb shelter. He suggested that warnings about the model’s danger work as marketing, and that such arguments can be used to justify keeping AI in the hands of a smaller group of people.

Q3: Is ‘fear-based marketing’ common in the AI industry?

Analysts and ethicists note that hyperbolic warnings about AI risks, including existential threats, have been a recurring theme in industry discourse, often promoted by the companies developing the technology. This can serve to attract attention, influence regulation, and heighten the perceived value of proprietary systems.

Q4: What is the history between OpenAI and Anthropic?

Anthropic was founded by former OpenAI research executives. While both companies focus on advanced AI, they have diverged in philosophy, with Anthropic emphasizing a more cautious, safety-centric approach and OpenAI favoring iterative public deployment. This has led to ongoing public disagreements on strategy.

Q5: Why does this debate matter for the future of AI?

This debate matters because it shapes public perception, influences upcoming government regulation, and determines who gets access to powerful AI tools. The conflict between controlled release and open iteration will directly impact how AI safety is managed and how benefits are distributed across society.

This post Sam Altman Blasts Anthropic’s Mythos Model as Deceptive ‘Fear-Based Marketing’ in AI Security War first appeared on BitcoinWorld.
